There is a particular kind of post-launch problem that global L&D teams are well aware of.

The programme rolled out on time. Completion rates look fine. Assessment pass rates are broadly acceptable. And yet something isn’t quite working. Maybe learners in certain markets are raising questions that the training was supposed to answer. Maybe local managers are telling you the content does not feel right, though they struggle to say exactly why. Perhaps engagement is down, or morale is low, or productivity isn’t hitting your global benchmark.

More often than not, when teams look back carefully, translation is somewhere in the picture.

Not bad translation in the obvious sense; I’m not talking about the kind of error that ends up in a screenshot someone takes the time to send you. I’m talking about something much subtler: content that is technically accurate but emotionally flat, or that uses terminology that is slightly off for the target audience, or that carries a tone that reads as authoritative in English but oddly bossy in the target language. The kind of problem that a qualified reviewer would catch immediately, but that nobody thought to put in front of one.

The faulty assumption that causes most of the trouble

Most organisations with a global learning programme make one foundational assumption that quietly undermines the quality of what they produce: they treat localisation as a production task rather than a design decision.

Under this assumption, translation occurs after the real decisions have been made; English content goes in, translated content comes out. The workflow is the same regardless of whether the content is a safety certification or a reading list recommendation.

This makes a certain kind of administrative sense; there’s no doubting that it’s simpler to manage one process than three. But it means the translation approach is never actually aligned with what the content needs to do, who will use it, or what happens if the language is imprecise.

When localisation is treated as a design decision, the questions change. Instead of “how do we translate this programme?”, you start asking “what does this specific content need from a translation workflow?” and “how much do we stand to lose if the translation’s a bit off here?” Those questions produce different answers depending on the content, and the answers should shape the approach.

Why learning content is particularly unforgiving

In most content categories, poor translation produces a visible problem. A mistranslated strapline, a confusing product description, an error in a customer-facing notification: all of these tend to surface quickly.

Learning content often doesn’t work that way. A learner, perhaps a new employee, who encounters an ambiguously translated assessment question doesn’t usually raise a flag. They make their best guess and may even assume the misunderstanding is their own. Often, cultural norms are also at play, discouraging direct feedback.

There is also the question of instructional intent. Learning design is precise work. The sequence in which information is presented, the way scenarios are framed, and the register in which feedback is written are all deliberate choices that affect how well learning transfers. A translation that is technically accurate but tonally or structurally wrong for the target language can erode the instructional logic of a carefully built programme.

Three ways to think about your content before you decide how to translate it

The most useful thing any L&D team can do before making localisation decisions is to categorise their content. Not by format, and not by programme, but by what each piece of content does and what the cost of getting it wrong is.

Three questions tend to cut through most of the complexity.

How central is this content to the learner journey? There is a meaningful difference between content that sits at the core of a learning experience (assessed modules, certification pathways, structured skill-building programmes) and content that plays a supporting role (reference materials, LMS interface text, job aids used in the flow of work). Core content affects outcomes directly, while supporting content affects the experience. Both matter, but they do not carry equal weight when something goes wrong.

How much does the quality of the language affect whether learning happens? Some learning content is primarily informational. Its job is to convey facts accurately, and if the translation is accurate, the content does its job. Other content is more nuanced. The way a concept is explained, the way a scenario is framed, and the way feedback is worded: these choices affect whether a learner understands, retains, and can apply what they have been taught.

What is the consequence if a learner misunderstands something? This is where compliance programmes, safety-critical training, and formally assessed content sit apart from everything else. A learner who misunderstands a soft skills module may be slightly less clear in a meeting. A learner who misunderstands a fire safety procedure, a data-handling obligation, or a clinical protocol is in an entirely different situation. The translation approach needs to reflect that.

Matching translation method to content type

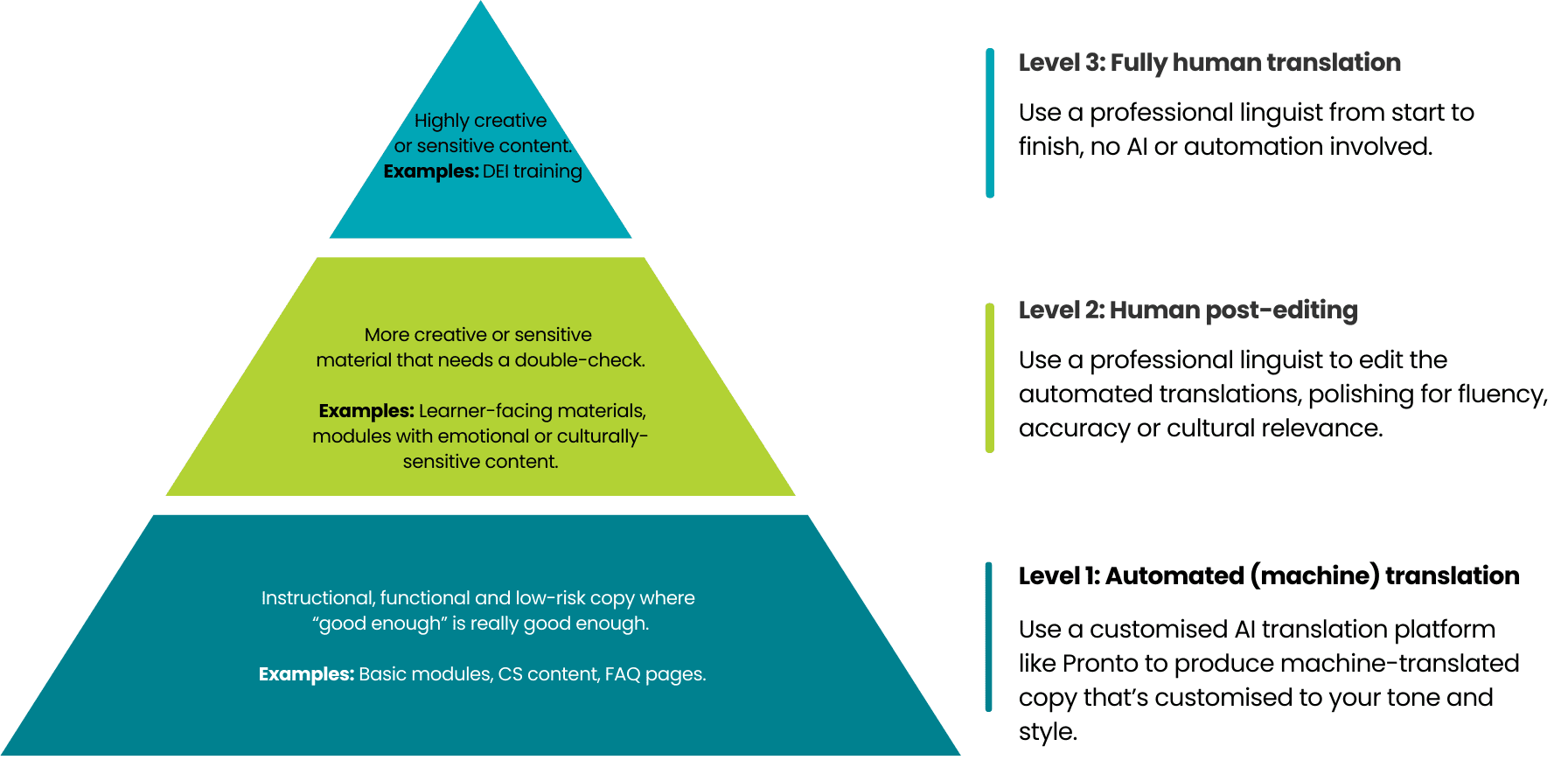

Once you have a clearer picture of what your content needs, the translation options start to make more sense as a spectrum rather than a menu.

Level 1: AI-led machine translation with automated quality checks (MTAP) is a genuinely effective approach for high-volume, low-instructional-complexity, and low-risk content. Learner notifications, reference materials, and practice-based knowledge checks are all reasonable candidates, provided the underlying AI configuration has been set up properly for your brand’s terminology and domain.

Level 2: AI-led machine translation with human post-editing (MTPE) is where most learning content, if approached thoughtfully, probably belongs. The AI handles the linguistic heavy lifting, while a qualified linguist with relevant subject matter knowledge reviews the output for accuracy, consistency, tone, and instructional clarity. Onboarding programmes, product knowledge training, procedural content, and soft skills programmes all benefit from the efficiency of a hybrid approach without carrying the risk level that would require something more rigorous.

Level 3: Expert, fully human translation is the right approach for content where precision is non-negotiable. That includes formally assessed programmes, compliance and regulatory training, safety-critical content, and any content where a translation error could have a legal, regulatory, or learner-safety consequence.

The language dimension that most localisation plans underestimate

Content type sits on one axis, and language on the other, and both deserve equal attention.

Machine translation does not perform equally well across all language pairs. Languages with large volumes of high-quality training data, such as French, German, and Spanish, produce reliable, reviewable AI output. Languages with smaller datasets, or those with significant structural distance from English, produce output that is less consistent and harder to evaluate without strong in-language expertise.

For L&D teams, this has a practical implication. An AI-led workflow that works well for your French content may not be appropriate for your Hindi or Arabic content, even if the content itself sits at the same point on the criticality scale. Language suitability needs to be assessed independently of content type, and the two should be considered together before any workflow decisions are made.
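To make the two-axis idea concrete, here is a minimal sketch of how a team might combine the three content questions with a language check. Everything specific in it is an assumption made for illustration: the 1-to-3 rating scale, the thresholds, the `WELL_RESOURCED` set (seeded only with the three languages named above), and the rule of moving up one level for other languages. It is not the logic of any particular tool.

```python
from dataclasses import dataclass

# Illustrative assumption: only the languages named in the article as
# producing reliable, reviewable AI output. Extend after your own assessment.
WELL_RESOURCED = {"fr", "de", "es"}

LEVELS = {
    1: "AI-led MT with automated quality checks",
    2: "AI-led MT with human post-editing",
    3: "Expert, fully human translation",
}


@dataclass
class ContentType:
    name: str
    centrality: int            # 1 = supporting, 3 = core to the learner journey
    language_sensitivity: int  # 1 = purely informational, 3 = highly nuanced
    consequence: int           # 1 = minor, 3 = legal, regulatory, or safety risk


def base_level(content: ContentType) -> int:
    """Map the three ratings to a level, ignoring language (assumed thresholds)."""
    if content.consequence == 3:
        return 3
    if content.consequence == 2 or max(content.centrality,
                                       content.language_sensitivity) >= 2:
        return 2
    return 1


def recommend_level(content: ContentType, language: str) -> int:
    """Adjust the content-based level for the target language.

    Assumption: languages outside WELL_RESOURCED move up one level,
    capped at level 3.
    """
    level = base_level(content)
    if language not in WELL_RESOURCED:
        level = min(level + 1, 3)
    return level


notification = ContentType("learner notification", 1, 1, 1)
onboarding = ContentType("onboarding programme", 3, 2, 2)
fire_safety = ContentType("fire safety procedure", 3, 3, 3)

for item, lang in [(notification, "fr"), (notification, "hi"),
                   (onboarding, "ar"), (fire_safety, "de")]:
    print(f"{item.name} [{lang}]: {LEVELS[recommend_level(item, lang)]}")
```

The point of the sketch is the shape of the decision rather than the numbers: content is rated first, language is assessed separately, and only then is a workflow chosen.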

How to get started

The Learning Localisation Scorecard is a free, interactive tool that takes you through exactly this process. You identify the types of learning content you regularly localise, rate each type against the three dimensions above, and add the languages you need. The tool provides a personalised report outlining the recommended approach for each combination.

It takes around 10 to 15 minutes, with no preparation needed beforehand.

Some teams find they have been spending more than necessary on content well-suited to a faster workflow. Others find they have compliance or assessed content that would benefit from a more rigorous approach than it’s currently receiving. Either way, you leave with something concrete to work with.

If you’d like to talk through your conclusions with us afterwards, we would be very glad to do so. No obligation and no sales pitch: just a straight conversation about what the results mean for your specific context.