We took a real Comtec blog article and translated it into German using three service levels: AI, AI with human oversight, and fully human translation. A professional linguist from our team then reviewed each version side by side and documented exactly what changed at every stage and why.

The results are more nuanced than “AI is good enough” or “only humans will do.” What the content needs to achieve matters more than the approach you use, and this case study shows what that looks like in practice.

If you’d like to see the same exercise done on your own content, we’d be happy to run a free sample comparison. More on that at the end of this article.

What we did, and why

Rather than creating a hypothetical example, we chose a short section from an existing Comtec blog article and applied three distinct service levels to the same source text:

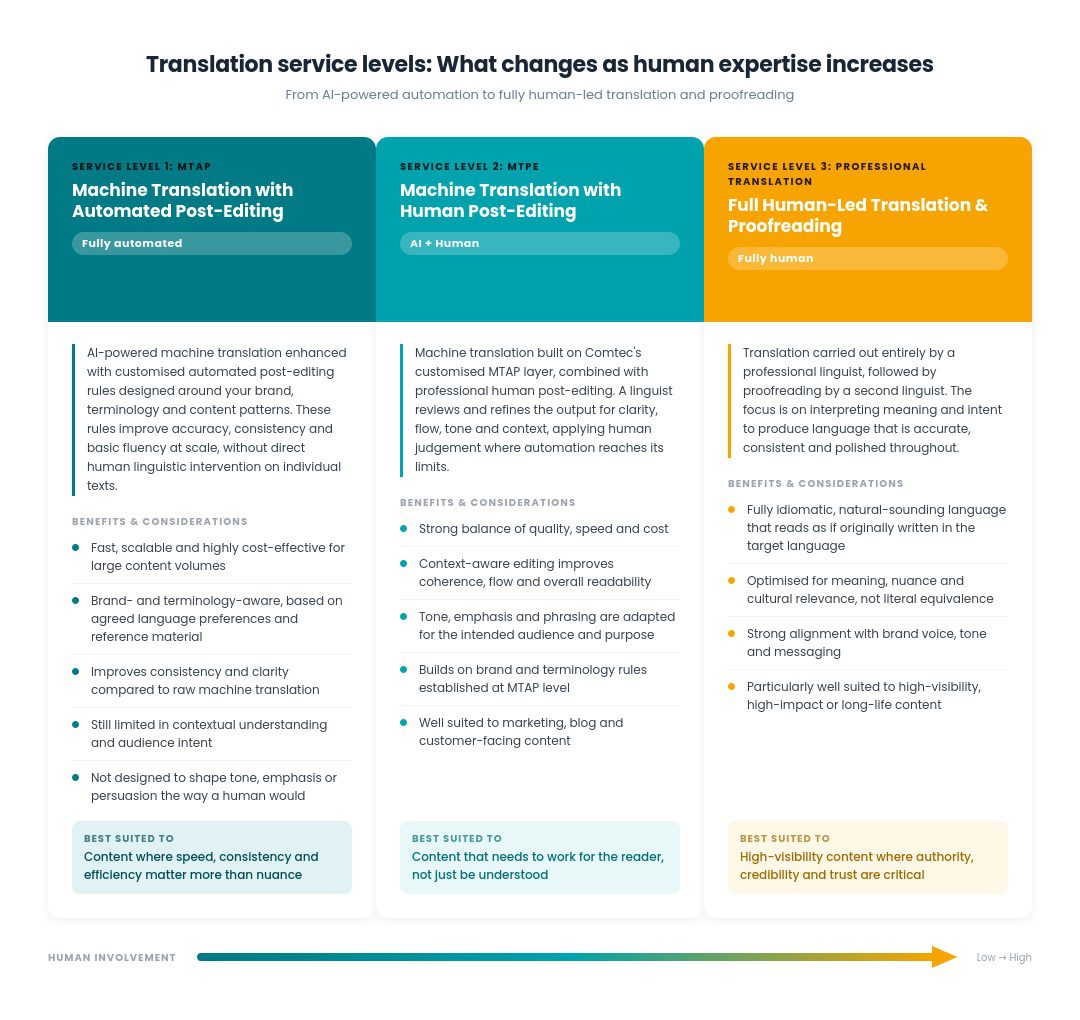

- MTAP — Machine Translation with Automated Post-Editing. Full AI translation enhanced with custom rules built around brand terminology, style preferences and content patterns. No human touches the individual text.

- MTPE — Machine Translation with Human Post-Editing. Here, we use the same AI and automated layer as MTAP, but a professional linguist then reviews and refines the output for clarity, flow, tone and context.

- Full human translation and proofreading. A professional linguist translates from scratch, and a second linguist then proofreads.

The table below breaks down how each service level works and what it delivers.

With that context in place, here’s what our linguist found after reviewing the actual outputs.

The three service levels in action

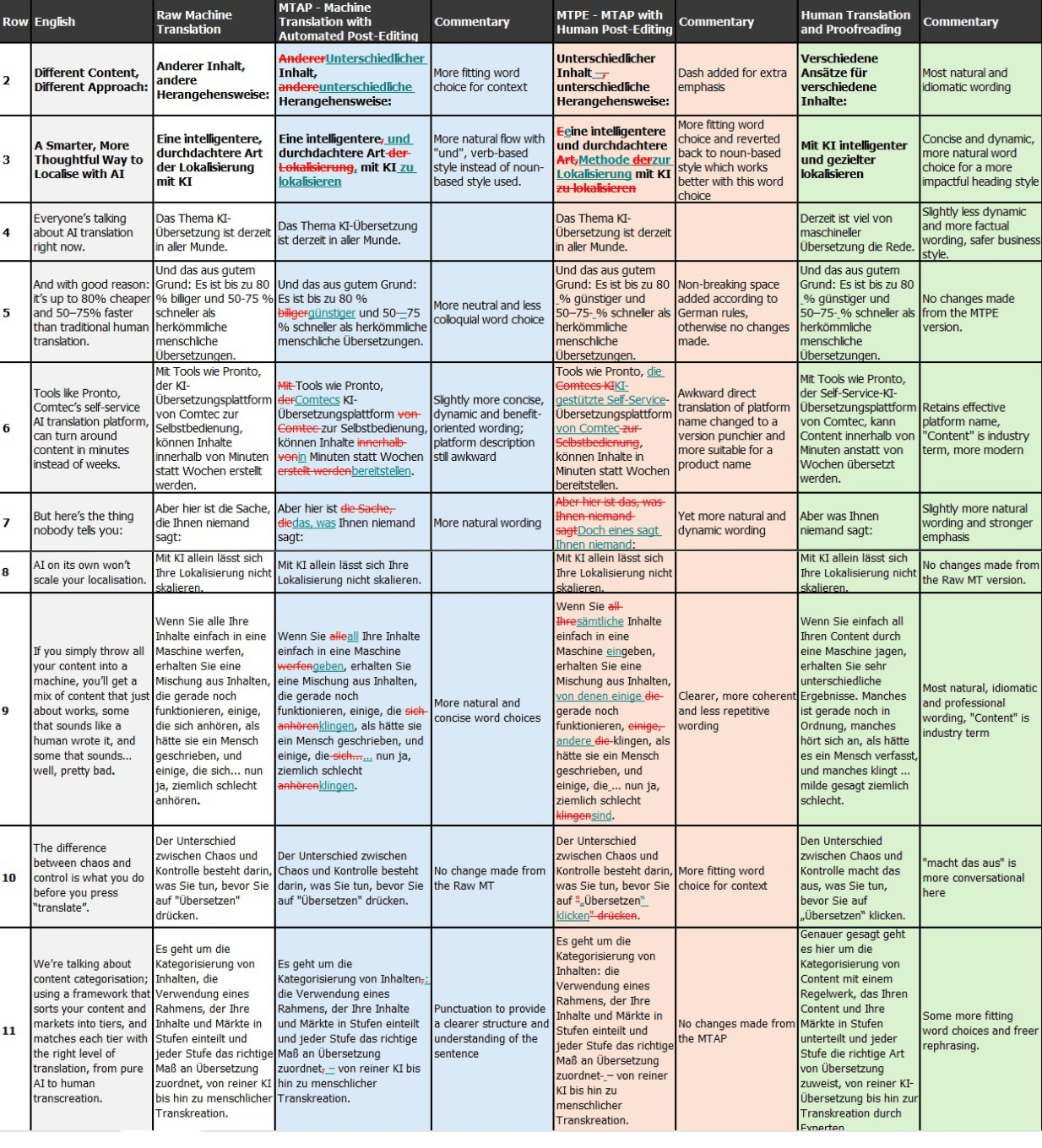

The table below is taken directly from a real working document: the actual notes our experienced linguist produced while reviewing each translation. Nothing has been polished or summarised. What you’re seeing is a short section of the source article translated three ways, with the linguist’s own commentary on every change they made and why.

A snapshot of the key differences our linguist found when reviewing how the same piece of text was translated via full AI, fully human translation and a hybrid approach.

And just a quick note on column 3, “Raw Machine Translation”. This is what you get when you feed content directly into an AI translation engine with no configuration, no brand rules and no quality controls. It’s the baseline.

We’ve included it because it makes the value of MTAP immediately visible; even the first service level represents a meaningful improvement over unprocessed AI output.

What you get with MTAP: the fully automated option

We say that MTAP is fully automated because after the initial setup, where a linguist trains an AI on your brand rules, terminology and style guide, there’s no human involvement in any particular piece of text.

At first glance, a lot of the MTAP output looks fine. Short, factual headings come through cleanly. In row 2, for example, “Different Content, Different Approach” becomes “Anderer Inhalt, andere Herangehensweise”, which does the job perfectly well.

The cracks show when the text gets conversational. Take row 9:

English: “If you simply throw all your content into a machine…”

MTAP output: “…alle Ihre Inhalte in eine Maschine werfen…”

The meaning is there, but the phrasing is extremely literal and doesn’t reflect how a German native would naturally express the idea.

A similar pattern appears in row 7, where “But here’s the thing nobody tells you” becomes “Aber hier ist die Sache, die Ihnen niemand sagt”. The word “Sache” is a direct translation of “thing”, but it carries a colloquial weight in German that feels out of place in professional content.

And in row 11, sentence structures that closely mirror the English result in constructions that feel long-winded in German.

The general conclusion is that MTAP is operationally strong; the linguist-defined prompts and rules that drive the post-editing phase help to improve accuracy, consistency and fluency at scale. But overall, it works sentence by sentence – it doesn’t interpret intent, shape rhetoric, or adapt tone to an audience.

For customer-facing content, that could be a real limitation.

What changes with MTPE, the hybrid approach where a linguist reviews the AI’s output

Our linguist found that human post-editing changes the character of the translation, not just its surface accuracy.

For example, in row 6, the platform description “Comtecs KI-Übersetzungsplattform von Comtec zur Selbstbedienung”, already awkward in the MTAP version, is replaced with a more natural expression that uses the established English loanword “Self-Service” and sounds more modern as a result. For a blog aimed at SaaS and marketing professionals, this is a much better translation.

In row 9, the linguist replaces “in eine Maschine werfen”, which is vivid but too rough for professional communication, with a clear, factual statement that conveys the same meaning without the colloquial edge.

And in row 10, the direct translation of the verb “press” is replaced by “klicken”, which is simply the more appropriate word for this digital context.

The bottom line?

At this level, the text flows naturally, addresses the reader directly, and feels written rather than processed. For blog content in particular, and marketing content more generally, MTPE frequently delivers the best balance of natural flow, reader engagement and cost efficiency.

Full human translation: What value does a professional linguist add?

The fully human translation changes the most from the raw machine translation baseline, and that’s the point.

In row 3, where the blog title “A Smarter, More Thoughtful Way to Localise with AI” had already been refined in the MTPE version to use “Methode” (a more structured and professional choice than “Art”), the human translator goes further still. The final title is shorter and more dynamic. The addition of “gezielter” introduces a strategic nuance not explicitly present in the English, sharpening the message in a way that feels modern, confident and punchy.

Given this is the opening hook, and probably the one shot you get at driving behaviour (i.e. securing an email open or a link click), the investment in translation quality here would almost certainly pay off.

Looking at rows 9 to 11 together, the shift is consistent; the human translator moves beyond sentence-level accuracy to focus on meaning, emphasis and readability as a whole.

For example, where the MTPE version used “in eine Maschine eingeben”, which is precise and professional but arguably tones down the strength of the original, the human translation uses a more vivid and widely understood metaphor. It’s slightly more expressive and more likely to engage the reader.

The finished sample reads as if originally written in German, the benchmark for high-visibility content.

Which approach is right for you?

Different content carries different levels of risk, visibility and commercial impact. Treating it all the same usually means overspending in some areas and under-investing in others.

The right question isn’t simply: Is AI translation good enough?

It’s: What does this content need to achieve, and what level of human involvement best supports that?

When you approach localisation that way, you can scale efficiently where speed matters, protect brand voice where it counts, and invest budget where it has the most impact.

See the difference in your own content, for free

If you’d like to see how this works in practice, we’ll run a short sample comparison using a piece of your own content. We’ll apply MTAP, MTPE, and full human translation side by side, walk you through the changes at each stage, and explain why.

It’s the clearest way to understand which level of human involvement makes sense for your content, rather than deciding in the abstract. Drop me an email or get in touch with us to request this service; it’s completely free of charge for one language and up to 1,000 words.